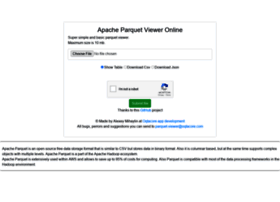

The following setting options are available:

- Use the legacy parquet-tools application for reading the files.
- The name of the parquet-tools executable or a path to the parquet-tools jar.
- Whether to write diagnostic logs to an output panel.
- Write diagnostic logs under the given directory.
- Diagnostic log level.

A simple native UWP viewer for Apache Parquet files (.parquet) also exists, based on the great …

A Parquet file is column-oriented, so unlike JSON, CSV, or TSV files it cannot be viewed normally as text.
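Conceptually, showing a column-oriented file as JSON means pivoting the stored columns back into rows. The pure-Python sketch below illustrates only that pivot, assuming every record has the same fields. It is not how Parquet actually encodes data on disk (the real format adds row groups, pages, encodings, and compression), and the helpers `to_columns` and `to_json_lines` and the sample rows are hypothetical.

```python
import json
from typing import Any


def to_columns(rows: list[dict[str, Any]]) -> dict[str, list[Any]]:
    """Pivot row-oriented records into one list per column.

    Assumes every row has the same keys; a real format would also need to
    represent missing values (nulls).
    """
    columns: dict[str, list[Any]] = {}
    for row in rows:
        for name, value in row.items():
            columns.setdefault(name, []).append(value)
    return columns


def to_json_lines(columns: dict[str, list[Any]]) -> str:
    """Pivot columns back into rows and emit one JSON object per line."""
    names = list(columns)
    n_rows = len(columns[names[0]]) if names else 0
    return "\n".join(
        json.dumps({name: columns[name][i] for name in names}) for i in range(n_rows)
    )


# Hypothetical sample rows
rows = [
    {"firstname": "James", "gender": "M", "salary": 3000},
    {"firstname": "Anna", "gender": "F", "salary": 4100},
]
columns = to_columns(rows)
print(columns["salary"])  # [3000, 4100]: all values of one column stored together
print(to_json_lines(columns))
```

Keeping each column's values together is what lets a reader load only the columns it needs (here, just the salary list), and it is also why the raw file does not read like line-by-line text.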

The extension views Apache Parquet files as JSON. When opening a Parquet file, a JSON presentation of the file will open automatically. After closing the JSON view, it is possible to reopen it by clicking on the link in the parquet view.

The extension used to require parquet-tools. Now it uses the parquets TypeScript library to parse the files. If you still want to use parquet-tools, set eParquetTools to true; parquet-tools must then be in your PATH or pointed to by the parquet-viewer.parquetToolsPath setting.

To write a PySpark DataFrame to a Parquet file, call parquet() on the DataFrame's writer:

```python
# df: an existing DataFrame; spark: an active SparkSession
df.write.parquet("/tmp/output/people.parquet")
```

PySpark provides a parquet() method in the DataFrameReader class to read a Parquet file into a DataFrame. Below is an example of reading a Parquet file into a DataFrame:

```python
# spark.read returns a DataFrameReader
parDF = spark.read.parquet("/tmp/output/people.parquet")
```

Append or Overwrite an existing Parquet file

Using the append save mode, you can append a DataFrame to an existing Parquet file. To overwrite the existing data instead, use the overwrite save mode:

```python
# Add df's rows to the existing Parquet data
df.write.mode("append").parquet("/tmp/output/people.parquet")
# Replace the existing Parquet data with df's rows
df.write.mode("overwrite").parquet("/tmp/output/people.parquet")
```

Outside Spark, Apache NiFi has a Parquet Record reader to read incoming Parquet formatted files; before it was added to Apache NiFi, you would have had to split the file line by line.

PySpark SQL lets you create temporary views on Parquet data for executing SQL queries. These views remain available until your Spark session (typically your program) ends.

```python
# Register the DataFrame read above as a session-scoped temporary view
parDF.createOrReplaceTempView("ParquetTable")
parkSQL = spark.sql("select * from ParquetTable where salary >= 4000 ")
parkSQL.show()
```

Now let's walk through executing SQL queries directly on a Parquet file. To do so, create a temporary view or table directly on the file instead of creating it from a DataFrame:

```python
spark.sql("CREATE TEMPORARY VIEW PERSON USING parquet OPTIONS (path \"/tmp/output/people.parquet\")")
spark.sql("SELECT * FROM PERSON").show()
```

Here, we created a temporary view PERSON from the people.parquet file. The result has the columns firstname, middlename, lastname, dob, gender, and salary.

When we execute a query on the PERSON table, Spark scans through all the rows and returns the results, similar to traditional database query execution. In PySpark, we can make such queries more efficient by partitioning the data with the partitionBy() method. Following is an example of partitionBy():

```python
# One directory level per partition column: gender first, then salary
df.write.partitionBy("gender", "salary").mode("overwrite").parquet("/tmp/output/people2.parquet")
```

When you check the people2.parquet output, it has two levels of partitions: "gender" directories, each with "salary" directories inside.

Retrieving from a partitioned Parquet file

The example below reads only the gender=M partition of the partitioned Parquet data into a DataFrame:

```python
parDF2 = spark.read.parquet("/tmp/output/people2.parquet/gender=M")
parDF2.show(truncate=False)
```

The output has the columns firstname, middlename, lastname, dob, and salary. The gender column is absent because its value is encoded in the directory that was read.

Creating a table on Partitioned Parquet file

Here, I am creating a table on the partitioned Parquet data and executing a query on it. Because the view covers only the gender=F partition, the query scans less data than it would on the table without partitions, which improves performance.

```python
spark.sql("CREATE TEMPORARY VIEW PERSON2 USING parquet OPTIONS (path \"/tmp/output/people2.parquet/gender=F\")")
spark.sql("SELECT * FROM PERSON2").show()
```

The result has the columns firstname, middlename, lastname, dob, and salary.

Complete Example of PySpark read and write Parquet file

```python
parDF1.createOrReplaceTempView("parquetTable")
```

We have learned how to write a Parquet file from a PySpark DataFrame, read a Parquet file into a DataFrame, and create views/tables to execute SQL queries. We also explained how to partition Parquet files to improve performance. Hope you liked it, and do comment in the comment section.
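The partitioned layout described above can be modeled with a small pure-Python sketch (not Spark code). It assumes Hive-style `column=value` directory names, as the gender=M path suggests, and shows two things: rows are grouped into nested `gender=<value>/salary=<value>` directories with the partition columns moved into the path, and reading one subdirectory skips every other directory. The helpers `partition_dir`, `layout`, and `read_partition` and the sample rows are hypothetical; Spark's real writer also handles file naming, value escaping, nulls, and type inference, none of which is modeled here.

```python
from collections import defaultdict
from typing import Any

Row = dict[str, Any]
# Hypothetical: same column order as partitionBy("gender", "salary")
PARTITION_COLS = ("gender", "salary")


def partition_dir(row: Row, cols: tuple[str, ...] = PARTITION_COLS) -> str:
    """Hive-style relative directory for a row, e.g. 'gender=M/salary=3000'."""
    return "/".join(f"{col}={row[col]}" for col in cols)


def layout(rows: list[Row]) -> dict[str, list[Row]]:
    """Group rows by partition directory. Partition columns are dropped from the
    stored rows because their values are encoded in the directory path instead."""
    groups: dict[str, list[Row]] = defaultdict(list)
    for row in rows:
        stored = {k: v for k, v in row.items() if k not in PARTITION_COLS}
        groups[partition_dir(row)].append(stored)
    return dict(groups)


def read_partition(groups: dict[str, list[Row]], prefix: str) -> list[Row]:
    """Return rows stored at or below `prefix`, skipping all other directories.

    Partition values that appear below the prefix (salary, when reading
    'gender=M') are restored as columns; values fixed by the prefix itself
    (gender) are not. Restored values are strings: no type inference here.
    """
    result: list[Row] = []
    for path, stored_rows in groups.items():
        if path == prefix:
            values: dict[str, str] = {}
        elif path.startswith(prefix + "/"):
            remainder = path[len(prefix) + 1:]
            values = dict(part.split("=", 1) for part in remainder.split("/"))
        else:
            continue  # pruned: rows in this directory are never read
        for row in stored_rows:
            result.append({**row, **values})
    return result


# Hypothetical sample rows
rows = [
    {"firstname": "James", "gender": "M", "salary": 3000},
    {"firstname": "Robert", "gender": "M", "salary": 4000},
    {"firstname": "Maria", "gender": "F", "salary": 4000},
]
groups = layout(rows)
print(sorted(groups))
# ['gender=F/salary=4000', 'gender=M/salary=3000', 'gender=M/salary=4000']
males = read_partition(groups, "gender=M")
print(males)
# [{'firstname': 'James', 'salary': '3000'}, {'firstname': 'Robert', 'salary': '4000'}]
```

In this model, reading gender=M returns salary but not gender, which mirrors the column list reported for parDF2, and the gender=F directory is skipped without being scanned.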
